8 min read

Neural Networks 101: Part 2

Understanding how neural networks learn through activation functions, forward propagation and backpropagation.

What Are Activation Functions?

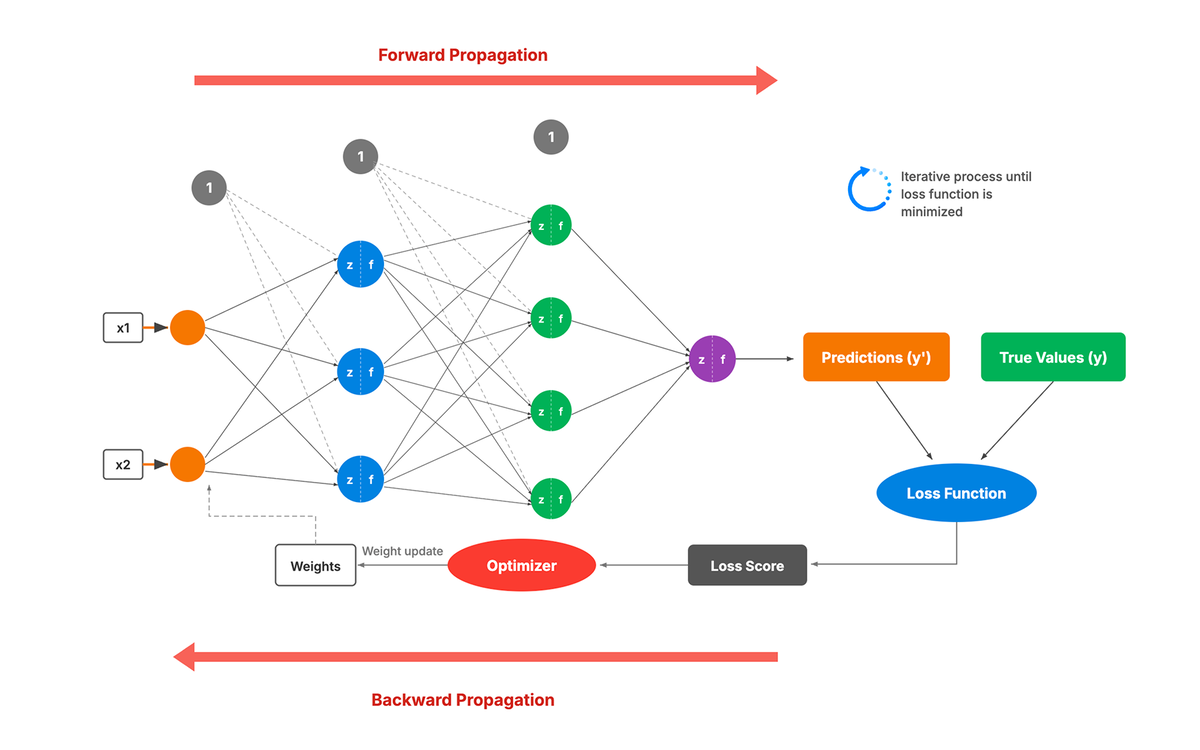

How Data Moves: Forward Propagation Step by Step

Source: fpt

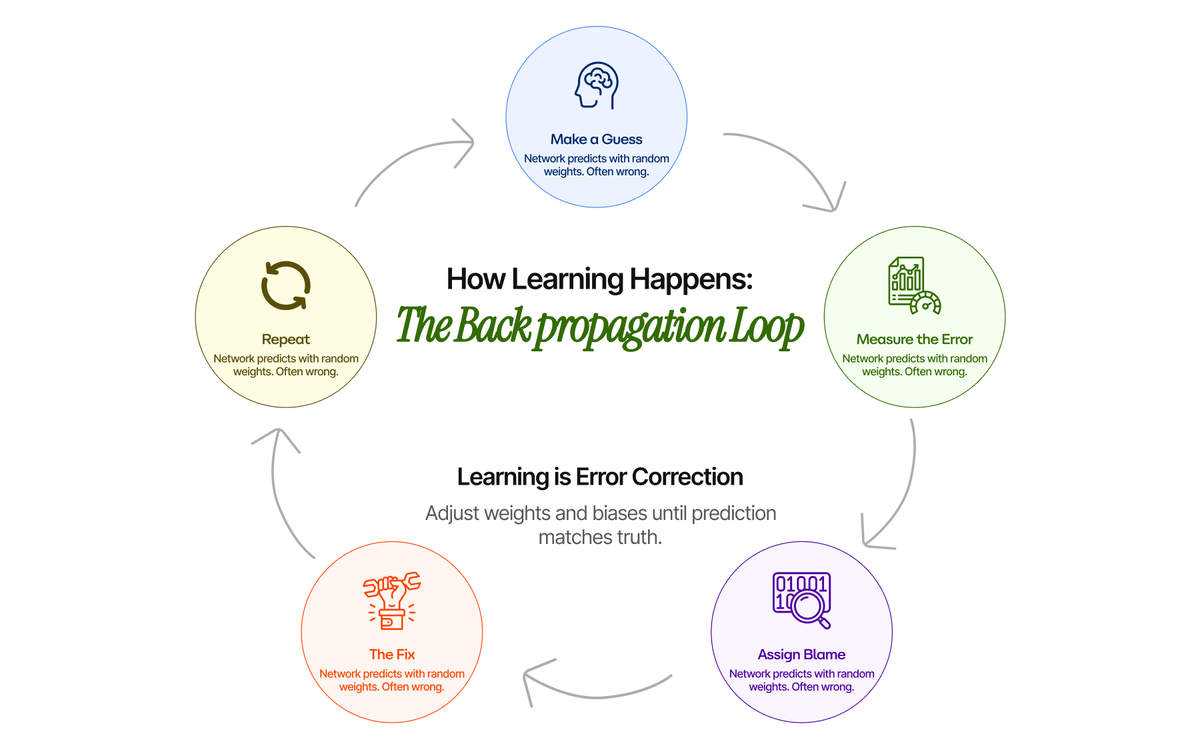

How Learning Happens: Backpropagation in Simple Terms

Training Workflow You Can Envision

What Neural Networks Learn

The Next Step
